The Horrors of AI-Driven Military Targeting, From Gaza to Iran

17.3.26

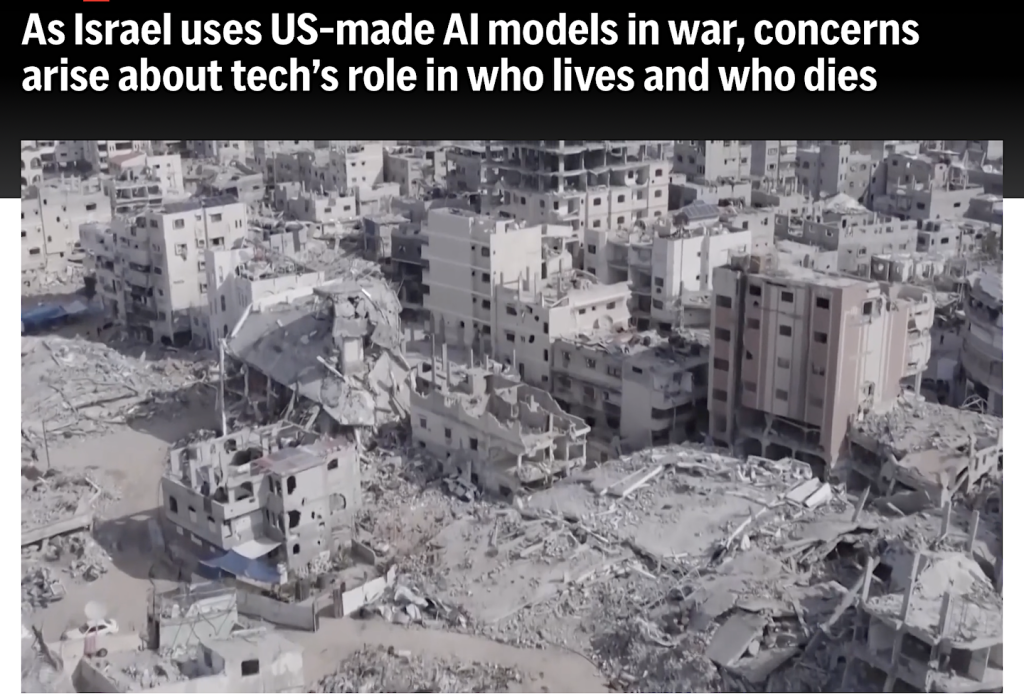

Anyone paying attention knows that, since October 7, 2023, when the State of Israel began carpet-bombing the Gaza Strip on a scale so grotesque that it can only realistically be compared to the impact of the atomic bombs dropped on Hiroshima and Nagasaki in August 1945, all sense of proportionality in warfare has been eviscerated. This evisceration has been normalized to such an extent that Israel, and its lapdog the US, are now engaged in similarly disproportionate attacks on Iran, with Israel also extending its depravity to Lebanon.

While some of this blatant violation of international humanitarian law can be traced to Israel’s relentless contempt, dating back decades, for any attempts to restrain its military actions, the truly shocking and soul-shredding intensification of those actions over the last 29 months, in which the US has finally moved from being Israel’s main backer to being a fully-fledged partner, has primarily been facilitated through both countries’ embrace of military targeting powered by AI (artificial intelligence). This has both promised and delivered military targets at a rate that is hundreds or thousands of times faster than what was previously possible, although, crucially, with little or no human oversight to address profound problems with the accuracy of the targeting.

To provide some necessary background, proportionality in warfare seeks to minimize the loss of civilian life during military operations, and its key definition comes from the 1977 Additional Protocol to the 1949 Geneva Conventions, which sought to apply rules governing warfare in the aftermath of the horrors of the Second World War. The Additional Protocol specifically addressed the protection of civilians, and, in Article 51, established protections against indiscriminate attacks on civilian populations, providing two particular examples of attacks that “are to be considered as indiscriminate”, which have subsequently provided a benchmark for assessments of proportionality.

The first of these is “an attack by bombardment by any methods or means which treats as a single military objective a number of clearly separated and distinct military objectives located in a city, town, village or other area containing a similar concentration of civilians or civilian objects”, and the second is “an attack which may be expected to cause incidental loss of civilian life, injury to civilians, damage to civilian objects, or a combination thereof, which would be excessive in relation to the concrete and direct military advantage anticipated.”

Notably, however, neither Israel nor the US has ratified the protocol, and in fact, long before Israel began carpet-bombing the Gaza Strip, it had already codified a military doctrine that laid waste to the protections enshrined in the Geneva Conventions, making clear its existence as a rogue state committed to the evisceration of all laws governing its military actions.

The Dahiya Doctrine

That doctrine is the Dahiya Doctrine, named after Dahiya, the suburb of Beirut that is currently under attack once more, and where, 20 years ago, Hezbollah, the Shiite political party and armed resistance movement, had its headquarters.

During Israel’s month-long war on Lebanon in 2006, the Dahiya Doctrine was introduced as a policy aimed at causing as much indiscriminate damage as possible to civilian areas in which militants were active so that the population would turn against them.

That, at least, was the theory that was eventually put forward in public, although, as Paul Rogers, emeritus professor of peace studies at Bradford University, explained in an article for the Guardian in December 2023, in practice the use of disproportionate force “extend[ed] to the destruction of the economy and state infrastructure with many civilian casualties, with the intention of achieving a sustained deterrent impact.” One contemporary report described how “around a thousand Lebanese civilians were killed, a third of them children. Towns and villages were reduced to rubble; bridges, sewage treatment plants, port facilities and electric power plants were crippled or destroyed.”

In 2008, when Gadi Eisenkot, then the head of the IDF’s Northern Command, publicly explained the Dahiya Doctrine, he stated, “What happened in the Dahieh quarter of Beirut in 2006 will happen in every village from which shots will be fired in the direction of Israel. We will wield disproportionate power and cause immense damage and destruction.”

Crucially, he also stated, “From our perspective, these are military bases”, adding, “Every one of the Shiite villages is a military site, with headquarters, an intelligence center, and a communications center. Dozens of rockets are buried in houses, basements, attics, and the village is run by Hezbollah men. In each village, according to its size, there are dozens of active members, the local residents, and alongside them fighters from outside, and everything is prepared and planned both for a defensive battle and for firing missiles at Israel.”

As Paul Rogers explained just two months into the Gaza genocide, the Dahiya Doctrine had been “used in Gaza during the four previous wars since 2008, especially the 2014 war.” In those four wars, as he proceeded to explain, “the IDF killed about 5,000 Palestinians, mostly civilians, for the loss of 350 of their own soldiers and about 30 civilians. In the 2014 war, Gaza’s main power station was damaged in an IDF attack and half of Gaza’s then population of 1.8 million people were affected by water shortages, hundreds of thousands lacked power and raw sewage flooded on to streets. Even earlier, after the 2008-9 war in Gaza, the UN published a fact-finding report that concluded that the Israeli strategy had been ‘designed to punish, humiliate and terrorize a civilian population.’”

Article 51 of the 1977 Additional Protocol to the Geneva Conventions explicitly prohibits the use of terror as a weapon of war, stating, unequivocally, “Acts or threats of violence, the primary purpose of which is to spread terror among the civilian population, are prohibited.” However, as noted above, Israel has nothing but contempt for international humanitarian law. Article 54 of the same protocol, entitled “Protection of objects indispensable to the survival of the civilian population”, stipulates that “Starvation of civilians as a method of warfare is prohibited”, and yet that is exactly what Israel has also been inflicting on the Palestinians of the Gaza Strip for the last 29 months.

“A mass assassination factory”: the use of AI in Gaza since Oct. 7, 2023

While Paul Rogers was correct to highlight Israel’s post-Oct. 7 genocide in Gaza as a monstrous escalation of the Dahiya Doctrine, it was not until Israel’s +972 Magazine published, at the same time, a revelatory article, ‘A mass assassination factory’: Inside Israel’s calculated bombing of Gaza (which I wrote about here), that it became apparent how the use of AI for military targeting was, essentially, hiding the screamingly disproportionate and arbitrary illegality of the implementation of the Dahiya Doctrine behind new technology systems that cloaked mass extermination policies with a veneer of legitimacy.

As the article reported, seven current and former members of Israel’s intelligence community explained that, after Oct. 7, Israel massively “expanded authorization for bombing non-military targets”, while “loosening constraints regarding expected civilian casualties”, and also relying on “an artificial intelligence system to generate more potential targets than ever before.”

It was not Israel’s first use of AI. In 2021, Israeli intelligence officials had proudly declared that an 11-day bombing campaign against Hamas was their “First AI War”, but that was a mere skirmish compared to the unbridled, AI-facilitated mass slaughter that was to come.

The “non-military targets” identified by +972 Magazine specifically included “private residences as well as public buildings, infrastructure, and high-rise blocks”, which were defined as “power targets”, a re-branding of the Dahiya Doctrine that, as “intelligence sources who had first-hand experience with its application in Gaza in the past” explained, was “mainly intended to harm Palestinian civil society: to ‘create a shock’ that, among other things, will reverberate powerfully and ‘lead civilians to put pressure on Hamas.’”

This, of course, was the core of the Dahiya Doctrine, but with Gaza, from October 2023 onwards, “because the Israeli government has files on the vast majority of potential targets in Gaza — including homes”, the sources explained that the army’s intelligence units knew, before carrying out an attack, roughly how many civilians were “certain to be killed”, also stating that, in one case, involving “an attempt to assassinate a single top Hamas military commander”, the Israeli military command “knowingly approved the killing of hundreds of Palestinian civilians.”

Chillingly, as one source explained, “Nothing happens by accident. When a 3-year-old girl is killed in a home in Gaza, it’s because someone in the army decided it wasn’t a big deal for her to be killed — that it was a price worth paying in order to hit [another] target. We are not Hamas. These are not random rockets. Everything is intentional. We know exactly how much collateral damage there is in every home.”

However, behind this targeting involving human assessment, the sources also explained that the widespread use of an AI system called “Habsora” (“The Gospel”) was generating targets “almost automatically at a rate that far exceeds what was previously possible.” One former intelligence officer memorably, and sickeningly, described it as facilitating a “mass assassination factory.”

In a follow-up article, the Guardian noted that, when AI was used in attacks on Gaza in 2021, Aviv Kochavi, then the head of the IDF, stated admiringly that, “in the past we would produce 50 targets in Gaza per year. Now, this machine produces 100 targets in a single day.” One official, however, explained to +972 Magazine how expanding the targets to alleged “junior Hamas members” — which had not happened previously — had caused so much death. “That is a lot of houses,” the official said, adding, “Hamas members who don’t really mean anything live in homes across Gaza. So they mark the home and bomb the house and kill everyone there.”

+972 Magazine noted that the sources added that military activity was not being “conducted from these targeted homes”, and cited one particularly critical source who added, “I remember thinking that it was like if [Palestinian militants] would bomb all the private residences of our families when [Israeli soldiers] go back to sleep at home on the weekend.”

Another source explained that, because a senior intelligence officer had told his officers that the goal was to “kill as many Hamas operatives as possible,” the result was that “the criteria around harming Palestinian civilians were significantly relaxed”, so that, for example, there were “cases in which we shell based on a wide cellular pinpointing of where the target is, killing civilians”, which was “often done to save time, instead of doing a little more work to get a more accurate pinpointing.”

The speed with which AI could generate targets, coupled with the deliberate expansion of the definition of targets regarded as militarily appropriate, meant that the destruction of Gaza that we all saw and were sickened by in those first few months was, essentially, indistinguishable from carpet-bombing, the reviled policy of total destruction that, after its widespread use in the Second World War, and in the Korean War and the Vietnam War, had largely been outlawed as a war crime since the introduction of the Additional Protocol to the Geneva Conventions in 1977.

“Lavender” and “Where’s Daddy?”

In April 2024, in a follow-up article, ‘Lavender’: The AI machine directing Israel’s bombing spree in Gaza (which I wrote about here), +972 Magazine exposed the existence of another AI program, “Lavender”, which was “designed to mark all suspected operatives in the military wings of Hamas and Palestinian Islamic Jihad (PIJ), including low-ranking ones, as potential bombing targets.”

Six Israeli intelligence officers, “who have all served in the army during the current war on the Gaza Strip and had first-hand involvement with the use of AI to generate targets for assassination”, explained how, “especially during the early stages of the war”, the AI program’s “influence on the military’s operations was such that they essentially treated the outputs of the AI machine ‘as if it were a human decision’”; in other words, human scrutiny was completely removed from a system which, in just a few weeks, “clocked as many as 37,000 Palestinians as suspected militants — and their homes — for possible air strikes.”

Notably, that figure of 37,000 was more than the entirety of Hamas’s military membership, according to official Israeli statements.

As the article spelled out, excruciatingly, “During the early stages of the war, the army gave sweeping approval for officers to adopt Lavender’s kill lists, with no requirement to thoroughly check why the machine made those choices or to examine the raw intelligence data on which they were based. One source stated that human personnel often served only as a ‘rubber stamp’ for the machine’s decisions, adding that, normally, they would personally devote only about ‘20 seconds’ to each target before authorizing a bombing — just to make sure the Lavender-marked target is male. This was despite knowing that the system makes what are regarded as ‘errors’ in approximately 10 percent of cases, and is known to occasionally mark individuals who have merely a loose connection to militant groups, or no connection at all.”

It should be apparent that even these claims about error rates may well be a massive underestimate, because, as the sources admitted, the system was used with almost no scrutiny or oversight at all.

This article also revealed the existence of “additional automated systems”, including one, repulsively called “Where’s Daddy?”, which “were used specifically to track the targeted individuals and carry out bombings when they had entered their family’s residences.” As the article explained, the army “systematically attacked the targeted individuals while they were in their homes — usually at night while their whole families were present — rather than during the course of military activity”, because, “from what they regarded as an intelligence standpoint, it was easier to locate the individuals in their private houses.”

As one source explained, “We were not interested in killing [Hamas] operatives only when they were in a military building or engaged in a military activity. On the contrary, the IDF bombed them in homes without hesitation, as a first option. It’s much easier to bomb a family’s home. The system is built to look for them in these situations.”

The sources also explained that, “when it came to targeting alleged junior militants marked by Lavender, the army preferred to only use unguided missiles, commonly known as ‘dumb’ bombs (in contrast to ‘smart’ precision bombs), which can destroy entire buildings on top of their occupants and cause significant casualties.” As one source described it, “You don’t want to waste expensive bombs on unimportant people — it’s very expensive for the country and there’s a shortage [of those bombs].”

Crucially, as the article revealed, the “Lavender” program analyzed “information collected on most of the 2.3 million residents of the Gaza Strip through a system of mass surveillance”, then assessed and ranked the likelihood of activity in the military wing of Hamas or PIJ, assigning “almost every single person in Gaza a rating from 1 to 100, expressing how likely it is that they are a militant.”

Having learned “to identify characteristics of known Hamas and PIJ operatives, whose information was fed to the machine as training data”, the program then located similar “features” amongst the general population. Those with “several different incriminating features” would “reach a high rating”, and would automatically become “a potential target for assassination.”

Alarmingly, however, as I described it at the time, “These ‘features’ might include ‘being in a Whatsapp group with a known militant, changing cell phone every few months, and changing addresses frequently’ — even though the former is no guarantee of militancy, and the latter two might well involve no militancy whatsoever. As the sources explained, the AI program ‘sometimes mistakenly flagged individuals who had communication patterns similar to known Hamas or PIJ operatives — including police and civil defense workers, militants’ relatives, residents who happened to have a name and nickname identical to that of an operative, and Gazans who used a device that once [unknowingly] belonged to a Hamas operative.’”

Furthermore, as one source explained, when “Lavender” was set up, the programmers “used the term ‘Hamas operative’ loosely,” so that “employees of the Hamas-run Internal Security Ministry, whom he does not consider to be militants,” were included. The source added that, “even if one believes these people deserve to be killed, training the system based on their communication profiles made Lavender more likely to select civilians by mistake when its algorithms were applied to the general population.”

The result of all of the above, as one of the sources explained, was that, “In practice, the principle of proportionality did not exist.”

In addition, in pursuing “high-value” targets, unheard-of rates of “collateral damage” were justified. One case, early on, involved “the killing of approximately 300 civilians” in an attack aimed at one individual, a figure that appalled an international law expert at the US State Department, who told the Guardian that they had “never remotely heard” of even “a one to 15 ratio being deemed acceptable.”

Last year, the extent of Israel’s contempt for proportionality, and of the need for human oversight of AI programs, was revealed when +972 Magazine and the Guardian released their analysis of an official IDF document, which established that 83% of those killed in Gaza since Oct. 7, 2023 were civilians. The reporters described this as “an extreme rate of slaughter rarely matched in recent decades of warfare”, even when “compared with conflicts notorious for indiscriminate killing, including the Syrian and Sudanese civil wars.”

In my own analysis, I suggested that the true total might be closer to 95%.

The involvement of a roll call of US companies

Although +972 Magazine’s investigations didn’t reveal any of the companies involved in creating Israel’s AI-driven kill programs, subsequent investigations revealed the dirty fingerprints of a roll call of US tech and AI companies.

On February 18, 2025, the Associated Press reported that, after months of investigations, three of its reporters had established that Microsoft, OpenAI, Google and Amazon were all heavily implicated in Israel’s AI targeting. Fourteen current and former employees of these companies spoke to the reporters, mostly on an anonymous basis “for fear of retribution”, and the reporters also spoke to “six current and former members of the Israeli army, including three reserve intelligence officers.”

Absurdly, even after +972 Magazine’s revelations, Israeli officials “insist[ed] that, even when AI plays a role, there are always several layers of humans in the loop.”

Both Microsoft and OpenAI (of which Microsoft is the largest investor) made huge profits after Oct. 7, via advanced AI models provided by OpenAI, using Microsoft’s Azure cloud platform. Microsoft also signed a three-year contract with the Israeli Ministry of Defense in 2021, which “was worth $133 million, making it the company’s second largest military customer globally after the US.”

The AP also noted that “Google and Amazon provide cloud computing and AI services to the Israeli military under ‘Project Nimbus’, a $1.2 billion contract signed in 2021 when Israel first tested out its in-house AI-powered targeting systems.”

The AP added, “The [Israeli] military has used Cisco and Dell server farms or data centers. Red Hat, an independent IBM subsidiary, also has provided cloud computing technologies, and Palantir Technologies, a Microsoft partner in US defense contracts, has a ‘strategic partnership’ providing AI systems to help Israel’s war efforts.”

As the AP article also explained, “The Israeli military uses Microsoft Azure to compile information gathered through mass surveillance, which it transcribes and translates, including phone calls, texts and audio messages”, which “can then be cross-checked with Israel’s in-house targeting systems and vice versa.”

“Typically”, the AP noted, “AI models that transcribe and translate perform best in English”, adding that “OpenAI has acknowledged that its popular AI-powered translation model Whisper, which can transcribe and translate into multiple languages including Arabic, can make up text that no one said, including adding racial commentary and violent rhetoric”, as was exposed in October 2024. Israeli officials also conceded that, whether using translating services or not, the AI programs frequently make errors, which go unnoticed unless human beings are closely monitoring the target lists.

Earlier, in April 2024, James Bamford, writing for The Nation, had focused in particular on the complicity of the US National Security Agency (NSA), which had first been exposed by the whistleblower Edward Snowden, who noted how “one of the biggest abuses” he saw while working at the NSA was how it “secretly provided Israel with raw, unredacted phone and e-mail communications between Palestinian Americans in the US and their relatives in the occupied territories”, who, as a result, were “at great risk of being targeted for arrest or worse.”

As Bamford proceeded to explain, “with Israel’s ongoing war in Gaza, critical information from NSA continues to be used by Unit 8200” — Israel’s equivalent of the NSA, which “specializes in eavesdropping, codebreaking, and cyber warfare” — to “target tens of thousands of Palestinians for death — often with US-supplied 2,000-pound bombs and other weapons.”

Bamford also explained the critical role played by Palantir, led by the morose Peter Thiel and the histrionic Alex Karp — both deeply troubling individuals — which he described as “one of the world’s most advanced data-mining companies, with ties to the CIA”, noting that “it is extremely powerful data-mining software, such as that from Palantir, that helps the IDF to select targets.”

Bamford added that, “While the company does not disclose operational details, some indications of the power and speed of its AI can be understood by examining its activities on behalf of another client at war: Ukraine.” As Karp has described it, “Palantir is responsible for most of the targeting in Ukraine”, and as was explained by Bruno Maçães, a former senior Portuguese official who was given a tour of Palantir’s London HQ in 2023, “From the moment the algorithms set to work detecting their targets until these targets are prosecuted [i.e., killed] no more than two or three minutes elapse. In the old world, the process might take six hours.”

In December 2025, it was also reported that Palantir played a key role in Israel’s targeting of Hezbollah leaders, and in the barbaric Israeli pager attacks in Lebanon in September 2024, when, as Middle East Eye described it, “42 people were killed and thousands wounded, many left with life-altering injuries to the eyes, face and hands.”

The attacks used pagers and walkie-talkies, into which explosive devices had been planted at the manufacturing phase, and were purportedly aimed at Hezbollah members. However, many, if not most, of those who were killed or suffered horrific injuries had no connections whatsoever to any kind of militant activity, and in any case, as UN experts explained, the attacks were a “terrifying” violation of international law.

Karp had bragged about Palantir’s involvement to the author Michael Steinberger, for his book, The Philosopher in the Valley: Alex Karp, Palantir, and the Rise of the Surveillance State, in which he wrote, “The company’s technology was deployed by the Israelis during military operations in Lebanon in 2024 that decimated Hezbollah’s top leadership. It was also used in Operation Grim Beeper, in which hundreds of Hezbollah fighters were injured and maimed when their pagers and walkie-talkies exploded (the Israelis had booby trapped the devices).”

The situation now: total warfare and total control

Fast forward to now, and the horror stories aired by +972 Magazine and other news outlets paying attention are clearly at play in the US’s first direct application of AI-driven targeting in its “war”, with Israel, against Iran.

As the FT reported on March 12, in an article entitled, “The AI-driven ‘kill chain’ transforming how the US wages war”, “Systems from Palantir and Anthropic are helping to turn torrents of battlefield data into thousands of strikes.”

Noting that, in the first four days of the war, the Pentagon said that “it struck more than 2,000 targets”, the FT’s reporters pointed out that it was using “Palantir’s Maven Smart System, which, alongside Anthropic’s Claude model, forms a real-time data analysis dashboard for operations in Iran”, marking “the first battlefield use of ‘frontier’ generative AI models, with AI tools widely used by civilians — from office workers to doctors and students — helping commanders interpret data, plan operations and provide real-time feedback during combat.”

Louis Mosley, the head of Palantir in the UK and Europe, and the grandson of Oswald Mosley, the leader of the British Union of Fascists, claimed, “The reason the frontier models are so important — the technological shift in the last year and a half — is they have moved from summarization to reasoning.” He added, as the FT described it, that “this ability of AI models to reason — or to consider a problem step by step — had enabled” what he called a “big jump in the volume of decisions and the speed at which [military personnel] can take those decisions during complex warfighting operations.”

Mosley’s claims are undoubtedly seductive for those who believe in the “reasoning” capacities of AI, but they are fundamentally unreliable, as experience, and alarming academic reports and investigations, suggest that AI systems are all fundamentally and dangerously flawed, routinely involving deceit, deception and hallucinations, and clearly requiring constant human oversight.

A clear example of this is the bombing, on the very first day of the “war” on Iran, of the Shajareh Tayyebeh elementary school in Minab, in southern Iran, in which at least 168 people were killed, most of them children, girls aged seven to 12.

As was explained in a Guardian article by Avner Gvaryahu, a DPhil researcher at the Blavatnik School of Government at the University of Oxford, and a former executive director of the Israeli NGO Breaking the Silence, “The weapons were precise. Munitions experts described the targeting as ‘incredibly accurate’, each building individually struck, nothing missed. The problem was not the execution. The problem was intelligence. The school had been separated from an adjacent Revolutionary Guard base by a fence and repurposed for civilian use nearly a decade ago. Somewhere in the targeting cycle, it seems that fact was never updated.”

Speaking to the FT, a former senior defense official for the US military, who asked not to be identified, said, “The girls’ school [bombing] feels to me like the building was on a target list for years. Yet this was missed, and the question is how? A machine? A human? I would like to believe AI can point out flaws like this, in theory. Unfortunately combat is never as pristine as the technology is designed to be.”

Jessica Dorsey, a researcher in the use of AI and international humanitarian law at Utrecht University, pointed out that, “If we look at the campaign against Isis, the coalition struck around 2,000 targets in the first six months of the campaign in Iraq and Syria. Now compare that with reports about this campaign, where the same numbers of strikes [by the US] occurred within just the first four days. That illustrates the scale and speed of target execution.”

Dorsey added that, although AI “has potentially already been involved in identifying exponentially more targets than in previous wars”, the basis for these decisions is alarmingly opaque. “Those targets could have existed beforehand — or they could have been generated quickly by AI systems, creating a serious concern about how carefully these have been vetted as required by law”, she said, adding, “How do you lift the veil on a system making 37 million computations per second? How on earth would you even be able to even trace that back in any way? Are you going to meaningfully exercise context-appropriate human control and judgment over decisions that are generated by these systems?”

Avner Gvaryahu acknowledged that “the exact role of AI in the strike on Minab has not been officially confirmed”, but he was adamant that “what is known is that the targeting infrastructure in which those systems operate has no reliable mechanism for flagging when the underlying intelligence is a decade out of date.”

As he added, “Whether or not an algorithm selected this school, it was selected by a system that algorithmic targeting built. To strike 1,000 targets in the first 24 hours of the campaign in Iran, the US military relied on AI systems to generate, prioritize, and rank the target list at a speed no human team could replicate. Gaza was the laboratory. Minab is the market. The result is a world in which the most consequential targeting decisions in modern warfare are made by systems that cannot explain themselves, supplied by companies that answer to no one, in conflicts that generate no accountability and no reckoning. That is not a failure of the system. That is the system.”

What do we do now?

Where we go from here is, perhaps, the most pressing question facing those of us who are not, or not as yet, threatened with death raining down from the skies via AI systems that are both unaccountable and unreliable, and that work at a speed that makes human oversight ever more necessary, even as that oversight is increasingly abandoned the faster the systems become.

When the internet revolution began, and particularly with the rise of social media, it seemed to me, perhaps naively, to be primarily used by the big tech companies to enrich themselves via advertising revenues, through targeting us with personalized ads. By 2015 and 2016, with the Brexit vote in the UK and the election of Donald Trump in the US, it moved towards changing people’s minds, locating vulnerable people who would fall for targeted ads supporting both Brexit and Trump, as well as ramping up division and distracting from the realities of oligarchic control through the demonization of immigrants. More recently, however, and especially since the start of Israel’s genocide in Gaza, and that malignant state’s concerted efforts to suppress all criticism of its actions as antisemitic, it has moved into even more alarming territory, focused on surveillance and control.

If the threat we pose is deemed intolerable, as the unfolding AI-driven wars are showing us, this technology, which once promised us a better world, will now kill us without a second thought. Palantir may be the most obviously evil company in the entire tech and AI sector, but all of them now pose an unprecedented threat to all of us.

On the eve of the launch of the “war” on Iran, for example, Pete Hegseth, the US’s absurd “Secretary of War”, ordered Anthropic, which has a $200 million contract with the Pentagon, to drop two particular guardrails from its Claude program — one preventing the total surveillance of all US citizens and everyone present in the US, and the other preventing the use of fully autonomous weapons without any human oversight.

In response, Anthropic’s CEO, Dario Amodei, refused to drop the guardrails, which prompted Hegseth to designate the company as a “supply chain risk” and to order a six-month phase-out of the use of its systems from all DOD infrastructure. However, as Shanaka Anslem Perera reported on March 16, the US military is still using Anthropic’s AI, even as other US companies — Lockheed Martin and Boeing — are “currently purging” Anthropic AI “from their commercial contracts to comply with the Hegseth order.”

Anthropic is currently challenging Hegseth in court, with Perera noting that “designating a company as a supply chain risk while simultaneously depending on its technology for active combat operations creates a constitutional and procurement paradox that no court has previously adjudicated.”

Executives from Google, Amazon, Apple and Microsoft are publicly supporting Anthropic, but, in reality, how can we trust that any of them fundamentally agree with Anthropic that, as Microsoft described it, AI tools “should not be used to conduct domestic mass surveillance or put the country in a position where autonomous machines could independently start a war”?

More probable, as Microsoft also stated, is that they are worried that the government’s behavior could cause “broad negative ramifications for the entire technology sector”; in other words, restraining them in any meaningful sense, when they all, quite clearly, believe that they should be allowed to operate without any restraint.

On March 16, Mehdi, a tech analyst on X, wrote, “I genuinely believe Palantir was never just a government contractor; it was always designed, from day 1, to embed itself so deep inside the intelligence and defense apparatus that ripping it out would be like trying to remove the nervous system from a living body.”

His words surely apply to all the big tech and AI companies, who, despite public ruptures like that between Hegseth and Anthropic, are also seeking to embed themselves into governments and government departments so thoroughly that they can’t actually be extracted, and who will continue to work, with those within government structures who support them, to redefine not only war, but also peace; a peace that will not exist unless everyone in the countries they control live lives of quiet and docile obedience, with no dissent allowed.

Our leaders are not our friends. Increasingly, they view most of us as a threat, and AI promises to deliver the means to control us like never before. We should — we must — revolt, depose all our leaders, tear all these AI systems down, and reclaim our lives for ourselves.

If we don’t, anyone who can, in any way, be regarded as a threat will face death, or unjust imprisonment, or life-threatening exclusion from a world of dystopian control and oppression, for which Israel’s genocide in Gaza provides the most alarming template.

* * * * *

Andy Worthington is a freelance investigative journalist, activist, author, photographer (of a photo-journalism project, ‘The State of London’, which ran from 2012 to 2023), film-maker and singer-songwriter (the lead singer and main songwriter for the London-based band The Four Fathers, whose music is available via Bandcamp). He is the co-founder of the Close Guantánamo campaign (see the ongoing photo campaign here) and the successful We Stand With Shaker campaign of 2014-15, and the author of The Guantánamo Files: The Stories of the 774 Detainees in America’s Illegal Prison and of two other books: Stonehenge: Celebration and Subversion and The Battle of the Beanfield. He is also the co-director (with Polly Nash) of the documentary film, “Outside the Law: Stories from Guantánamo”, which you can watch on YouTube here.

In 2017, Andy became very involved in housing issues. He is the narrator of the documentary film, ‘Concrete Soldiers UK’, about the destruction of council estates, and the inspiring resistance of residents; he wrote a song, ‘Grenfell’, in the aftermath of the entirely preventable fire in June 2017 that killed over 70 people; and, in 2018, he was part of the occupation of the Old Tidemill Wildlife Garden in Deptford, to try to prevent its destruction — and that of 16 structurally sound council flats next door — by Lewisham Council and Peabody.

Since 2019, Andy has become increasingly involved in environmental activism, recognizing that climate change poses an unprecedented threat to life on earth, and that the window for change — requiring a severe reduction in the emission of all greenhouse gases, and the dismantling of our suicidal global capitalist system — is rapidly shrinking, as tipping points are being reached much more quickly than even pessimistic climate scientists expected. You can read his articles about the climate crisis here. He has also, since October 2023, been sickened and appalled by Israel’s genocide in Gaza, and you can read his detailed coverage here.

To receive new articles in your inbox, please subscribe to Andy’s new Substack account, set up in November 2024, where he’ll be sending out a weekly newsletter, or his RSS feed — and he can also be found on Facebook (and here), Twitter and YouTube. Also see the six-part definitive Guantánamo prisoner list, The Complete Guantánamo Files, the definitive Guantánamo habeas list, and the full military commissions list.

Please also consider joining the Close Guantánamo campaign, and, if you appreciate Andy’s work, feel free to make a donation via PayPal or via Stripe.

Who's still at Guantánamo?

28 Responses

Andy Worthington says...

When I posted this on Facebook, I wrote:

My detailed analysis of a burning topic that ought to be of huge concern to us all — the rise of AI-driven targeting in warfare, which generates military targets hundreds or thousands of times faster than human analysts, but which is both unreliable, and dependent on parameters for targeting that are overly broad, and which, crucially, are generated so fast that, to secure “results”, the essential need for significant human oversight is being ignored.

I trace the development of AI in warfare from its roots in Israel’s ongoing genocide in Gaza, as revealed through groundbreaking reports by Israel’s +972 Magazine, which showed how any sense of proportionality in wartime — avoiding the targeting of civilians in military actions, or their deaths as “collateral damage” — has been completely swept aside, along with almost all human checks on the AI’s targeting, leading to an unparalleled situation in which, according to the Israeli military, 83% of those killed in Gaza were civilians — although my own assessment is that it may be closer to 95%.

I also examine the deep involvement of US tech and AI companies in Israel’s genocide — including Microsoft, OpenAI, Google, Amazon, Anthropic and Palantir — and bring the story up to date with the US’s direct participation in the genocidal Gaza model of AI targeting in its war on Iran, with inaccurate targeting exposed on the very first day of hostilities, when an elementary school in Minab, in southern Iran, was hit, killing at least 168 people, most of them children, girls aged seven to 12.

I conclude that none of the major tech and AI companies can be trusted, because they are all, to varying degrees, embedded within our governments, and are all complicit in implementing, or seeking to implement, sweeping programs of surveillance and control, which, as I describe it, “redefine not only war, but also peace; a peace that will not exist unless everyone in the countries they control live lives of quiet and docile obedience, with no dissent allowed.”

As I proceed to explain: “Our leaders are not our friends. Increasingly, they view most of us as a threat, and AI promises to deliver the means to control us like never before. We should — we must — revolt, depose all our leaders, tear all these AI systems down, and reclaim our lives for ourselves. If we don’t, anyone who can, in any way, be regarded as a threat will face death, or unjust imprisonment, or life-threatening exclusion from a world of dystopian control and oppression, for which Israel’s genocide in Gaza provides the most alarming template.”

If that sounds alarmist, I can only suggest that it really isn’t. The dystopian future has already been announced in Gaza, and there is no reason to think that those who control the weapons — whether military or AI — will stop there.

...on March 17th, 2026 at 6:53 pm

Andy Worthington says...

Please join me on Substack to get links to all my work in your inbox. Free or paid subscriptions are available, although the latter ($8/month or $2/week) are absolutely essential for a reader-funded writer like myself, and if you can help out at all it will be very greatly appreciated.

Here’s my new post, promoting my article above: https://andyworthington.substack.com/p/the-horrors-of-ai-driven-military

...on March 17th, 2026 at 10:18 pm

Andy Worthington says...

Michael Leonardi wrote:

Andy, I have been working on this very same topic this week, researching and writing. It’s lunacy and seems we had better figure out more effective ways to monkey wrench 🔧 this death cult more effectively.

...on March 17th, 2026 at 10:18 pm

Andy Worthington says...

Glad to hear that you’re working on this too, Michael. Perhaps the best we can do for now is to keep on trying to illuminate the scale of the problem, although after Sam Altman made his speech recently about how ineffective humans are, because they require 20 years of feeding before they can be exploited, I started encouraging people to, at least, boycott ChatGPT. Just today, though, my wife was telling me about how a student was using Anthropic’s Claude to organize his studies, and I realized how, bizarrely, this is the same Claude that, in the hands of the US military, is telling them who to kill in Iran.

I do genuinely think that almost every aspect of AI is deadly for us, and I hope more and more of us can work to get that message across. Palantir, of course, is a particularly evil problem, and in the UK we’re having to deal with their massive infiltration of the government, including the NHS. There’s a detailed report here: https://www.medact.org/2026/resources/briefings/briefing-palantir-fdp/

...on March 17th, 2026 at 10:19 pm

Andy Worthington says...

Kären Ahern wrote:

It is terrifying, I don’t even want to have to get a new phone. But the insanity of AI as mass murdering tools, or an individual kill … is nothing I even want to see in a horror film. I want us to go back to the old world and never stop teaching peace and compassion.

...on March 17th, 2026 at 10:29 pm

Andy Worthington says...

Imagine how I feel, Kären. I just got a phone for the first time! 😉 I think what we all need to do first of all is to mobilize to educate people about the unparalleled perils of AI – and I hope my article helps – and also to encourage people not to use it. We’re some way off having to ditch our devices, and especially our phones, but it’s worth bearing in mind that this is a future probability if we don’t succeed in taking down these monsters with their enthusiasm for various forms of enslavement.

Like you though, I do find myself regularly reflecting on the world of 30 years ago, and how free we were as individuals, even within our capitalist systems. We need to find ways to recapture that. Going out without phones to remote places, for example. We aren’t meant to live in prisons, whether real or virtual.

...on March 17th, 2026 at 10:29 pm

Andy Worthington says...

Kären Ahern wrote:

Agree. Also, good for you being phoneless for so long.

...on March 18th, 2026 at 9:00 am

Andy Worthington says...

Not having a phone was infuriating for my wife and son, Kären, which I understand, but I see it mostly as a replacement for a landline, and not as a tracking device when I’m out and about, and particularly not in relation to any kind of protests, when I will continue to take photos with a camera that’s not connected to the internet. I think that’s a useful precaution that anyone exercising their alleged First Amendment rights – or alleged freedom of speech and assembly in the UK – should be aware of, because the trajectory of the future seems pretty clear to me: Palantir, or other surveillance organizations, will continue to accrue information about us, and will eventually divide us into threats and non-threats, at which point life may start to become more difficult. We all need to start thinking of a future in which we gather in numbers without any tracking devices.

...on March 18th, 2026 at 9:01 am

Andy Worthington says...

Al Glatkowski wrote:

Yes, it is a serious problem, but AI is like Pandora’s Box, in my opinion, and it’s already out, big time, and the remnant remaining inside has definitely shrunk. I know several of my friends have already played around with it. But I have chosen not to do so until I have more information on it. But, like I said, it’s loose, and its whole purpose, from my understanding so far, is to build itself upon whatever exists and what it’s learning. What “intelligence”, whether animal or machine, would not do so?

...on March 18th, 2026 at 9:02 am

Andy Worthington says...

Good to hear from you, Al. It’s certainly out there, but its primary military and surveillance purpose as a harvester and analyst for vast amounts of raw data on who we are and where we are and what can be inferred from the data about what we think – from Gaza to Iran to the streets of the US – isn’t something that I think we should be passive about.

The billionaire Larry Ellison of Oracle, whose son David is currently hoovering up as much US media and social media as possible, is on record as endorsing AI-driven surveillance to ensure that “citizens will be on their best behavior.” Obviously, any concept of “best behavior” is not a given and its parameters have to be inferred – and clearly ought to enshrine First Amendment rights – but that’s not what I’d conclude when the person proposing it is a fanatical supporter of the only country on earth that now actively exists solely to commit genocidal atrocities wherever and whenever it feels like it, and that continues to work assiduously to try to ensure that no one anywhere is allowed to criticize its actions, and that, if they do, there should be dire consequences.

https://www.businessinsider.com/larry-ellison-ai-surveillance-keep-citizens-on-their-best-behavior-2024-9

...on March 18th, 2026 at 9:03 am

Andy Worthington says...

Lizzy Arizona wrote:

It’s Gaza, it’s here, it’s now, it seems to be preferred in the DC war room.

...on March 18th, 2026 at 9:04 am

Andy Worthington says...

Yes, that’s the worry, Lizzy. The DOD now seems very much to be engaging in its first “war” using the template established by Israel in Gaza and Lebanon, with, of course, a significant overlap between both countries via the mainly US tech and AI companies who have taken over targeting from military and intel analysts. The power of AI-driven militarized systems to wage autonomous warfare – which Anthropic’s CEO was recently resisting handing control of to Pete Hegseth – already seems to be a reality, as I hope I showed in my article. Human oversight is already almost entirely optional.

...on March 18th, 2026 at 9:05 am

Andy Worthington says...

Fiona Russell Powell wrote:

Larry Ellison talked about the mass surveillance system at least a year ago, Andy. To help the police and to help citizens to be good citizens. Now it’s closer to becoming a reality. Robocop and Metacorp weren’t fiction, after all.

...on March 18th, 2026 at 3:40 pm

Andy Worthington says...

Yes, it was in September 2024, Fiona, and the future has been getting darker ever since. Why wouldn’t these monsters decide who gets to live and who dies (or is ostracized or imprisoned) when they finally have the AI programs to turn all of our lives into sortable data, essentially in the blink of an eye? People need to start taking the threat more seriously.

...on March 18th, 2026 at 3:40 pm

Andy Worthington says...

Michele Rowe wrote:

AI-War. Is this what we’ve come to? Wars with AI false (and deliberate) targets, that sees everything as collateral damage, without feelings. Led by war mongering, psychopaths and Israel and US fascist supremacy. Torture, paedophilia, the weapons and fossil fuel industries and its propaganda machines that have no care for the planet other than land grabs and illegal, brutal wars.

...on March 18th, 2026 at 3:41 pm

Andy Worthington says...

That’s a succinct analysis of the situation, Michele. Good to hear from you. Now we need to act.

...on March 18th, 2026 at 3:42 pm

Andy Worthington says...

Michael Leonardi wrote, in response to 4, above:

https://www.rainews.it/amp/articoli/2026/03/peter-thiel-a-roma-per-seminari-sullanticristo-chi-e-il-controverso-magnate-pro-trump-c7b8010c-ad78-484b-863e-dc46c5a3188c.html

...on March 18th, 2026 at 3:50 pm

Andy Worthington says...

Thanks, Michael. I found a worthwhile WIRED article from last September about Peter Thiel and his long-standing antichrist obsession – ‘The Real Stakes, and Real Story, of Peter Thiel’s Antichrist Obsession’: https://www.wired.com/story/the-real-stakes-real-story-peter-thiels-antichrist-obsession/

The tagline: “Thirty years ago, a peace-loving Austrian theologian spoke to Peter Thiel about the apocalyptic theories of Nazi jurist Carl Schmitt. They’ve been a road map for the billionaire ever since.”

...on March 18th, 2026 at 3:51 pm

Andy Worthington says...

Also well worth a read – Nafeez Ahmed’s article, ‘Palantir’s Double Conflict of Interest in the War Against Iran’, for Byline Times: https://bylinetimes.com/2026/03/05/palantirs-double-conflict-of-interest-in-the-war-against-iran/

...on March 18th, 2026 at 3:52 pm

Andy Worthington says...

Janet Weil wrote:

An important article, Andy, which I will share with others. Thanks!

...on March 18th, 2026 at 8:13 pm

Andy Worthington says...

Thanks so much, Janet. I’m so glad you appreciated it.

...on March 18th, 2026 at 8:14 pm

Andy Worthington says...

Red Gilchrist wrote:

It is sick. It can destroy truth, and substitute lies & deceit & false stories, veiled as truth.

Dystopian it is, in SPADES❗️

...on March 18th, 2026 at 8:44 pm

Andy Worthington says...

Thanks for your assessment, Red. Good to hear from you.

...on March 18th, 2026 at 8:44 pm

Andy Worthington says...

Fiona Russell Powell wrote:

Andy, I had assumed that most people simply don’t understand the threat they are to us, on an existential level, actually, if you extrapolate it to the only possible interpretation of some of their future plans and current scientific and technological research. But I’ve discovered that people are more aware than I thought. The big problem is the learned helplessness that’s been installed in us since the 1970s and they’re making life harder for the “Golden Billion”, now they’re coming for us too, and so many feel exhausted and crushed by struggling to meet daily needs and have some semblance of a decent life that they have no energy left to do anything. Also little appetite for it. Sticking one’s head in the sand and hoping it will magically get better is a more comfortable position to take. I do understand but those days are over. People say “I know” (they understand the implications of nationwide digital ID and being forced to go cashless by next year, for instance) and sigh, shrug, then say “but what can we do?” I think the answer is quite simple but it would entail some short-term discomfort and a little planning. Unfortunately, we’ve become too soft and addicted to our artificial way of life, deliberately manufactured to make us so. It’s all very sad. I can’t understand why people aren’t prepared to fight tooth and nail for their kids and grandchildren to have the chance of a decent future. 😢

...on March 19th, 2026 at 9:42 am

Andy Worthington says...

Thanks for your thoughts, Fiona. That description of societal “learned helplessness” is excellent, although I tend to date it not even to the 80s, under Thatcher (although that’s when selfish greed was fully revived as a societal driver), but to the Blair years, when hope – revived after 18 years of horrible Tory rule – was so thoroughly dashed. I regularly describe Blair as having wielded a “psychic cosh” on the British people.

The question of people knowing the dreadful future that awaits us, but doing nothing about it, is, of course, an essential one, and it’s one that has consumed climate scientists and activists for decades. I can only conclude, after years of thinking about it, that it’s a result of us being too far removed from our actual presence as part of the miraculous web of life on earth – through distractions, for which smartphones and social media seem to be particularly responsible, along with a degraded cultural environment – so that people either don’t feel the collapse, or, when they glimpse it, decide to hide, even though that particular course of action is logically absurd.

...on March 19th, 2026 at 9:43 am

Andy Worthington says...

Please check out the excellent new article by Michael Leonardi for CounterPunch, ‘Palantir and the Silicon Valley Death Machine: Tech Giants Profiting from Israeli Genocide and American Empire’: https://www.counterpunch.org/2026/03/20/palantir-and-the-silicon-valley-death-machine-tech-giants-profiting-from-israeli-genocide-and-american-empire/

...on March 20th, 2026 at 11:11 pm

Andy Worthington says...

For anyone interested in AI-driven targeting in warfare, I recommend this detailed analysis by Kevin Baker of the history of the “kill chain” — the long-standing efforts by military commanders to reduce the time between finding military targets and hitting them, which have consistently failed to recognize their own weaknesses, and which have now reached their deadliest phase yet, through the use of AI, with Baker particularly focusing on the development of Maven, the US targeting system that Palantir took over in 2019.

https://artificialbureaucracy.substack.com/p/kill-chain

...on April 5th, 2026 at 10:26 am

Israel’s New World Order: Nothing But the Threat of Endless Death – Theothernews says...

[…] As I explained in an article last month, The Horrors of AI-Driven Military Targeting, From Gaza to Iran: […]

...on April 30th, 2026 at 6:24 am